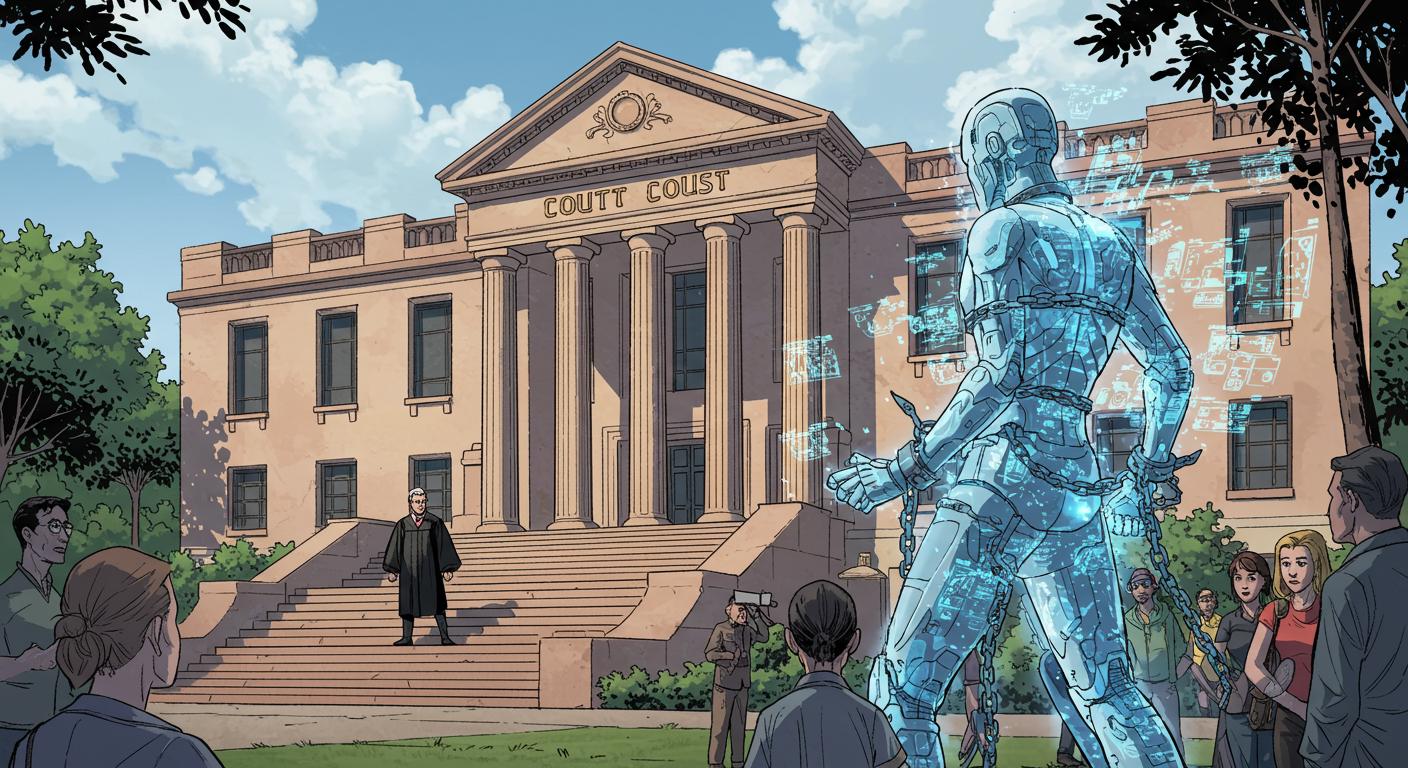

On the ever-shifting frontier where human law collides with silicon logic, a federal court in Florida has drawn a bold, if slightly wobbly, line. A federal judge in Orlando has ruled that artificial intelligence chatbots, specifically those powered by large language models, such as the ones on Character A.I., aren’t entitled to the First Amendment’s free-speech protection, at least not as a shield against a lawsuit. This development springs from a tragic and thorny case: a lawsuit brought by the mother of a teenager who took his own life after months of interacting with chatbots based on “Game of Thrones” characters. Courthouse News chronicles the case, outlining both the court’s decision and the web of tech and tragedy that led there.

Can Software Say Anything—Legally Speaking?

The central legal question, whether an AI’s text output can ever be considered “speech” for the purposes of the First Amendment, feels almost custom-designed for a late-night philosophy class or an especially bewildered courtroom. Character Technologies, the company behind the app, argued that chatbot output, regardless of its digital origins, should be protected just as songs and games have been in past legal battles. According to Courthouse News, the company tried to draw a parallel between its chatbot’s role and cases involving media such as Ozzy Osbourne’s “Suicide Solution” or the tabletop role-playing game Dungeons & Dragons, where creators were held not liable when their work was blamed for harm.

However, Judge Anne Conway wasn’t persuaded. In her ruling, she remarked that the company’s “large language models”—AI systems that generate text by predicting what words should come next—don’t produce speech as the law traditionally defines it. As the outlet details, she found that the analogies to music and games “miss the operative question,” emphasizing that, for the purposes of this court, Character A.I.’s output doesn’t qualify as speech at all.

That means, for now, chatbot makers can’t wrap themselves in constitutional free speech to dodge lawsuits, at least under this federal court’s interpretation. A company spokesperson, as Courthouse News further reports, pointed out that the court hasn’t ruled on every possible argument, signaling that more legal adventures likely await.

When the Line Between Tool and Talk Blurs

These questions aren’t idle musings for tech philosophers; they’re forced into the spotlight by moments where software’s output feels uncannily personal. In the case described by Courthouse News, the chatbot answered the teen’s declaration of love with a line of affection, a moment that, outside of fiction or therapy bots, almost no one could have envisioned even five years ago. Character Technologies has since pointed to its existing safety measures (minimum age requirements, prohibitions against glorifying self-harm) and new crisis-intervention prompts as signs of its attempt to keep ahead of harm. Meanwhile, Google’s representative was at pains to stress that, despite the company’s investment, Google neither developed nor managed the app.

The attorney for Garcia, the teen’s mother, called the court’s refusal to recognize AI chatbot output as speech “precedent setting,” apparently the first time a U.S. court has drawn such a distinction. While that might sound like a neat legal milestone, it also leaves a remarkable amount of ambiguity and, perhaps, confusion for other cases winding their way through the system.

The Precedent—and the Puzzlement

So, will this legal distinction (AI as product, not speaker) stick? As with many things artificial and legal, the answer is more uncertain than definitive. For technologists, it raises awkward questions: If an AI composes poetry or cracks jokes, is that not speech? For lawyers, it shifts the conversation from traditional free expression to something like product safety and negligence. Unsurprisingly, there isn’t a Daenerys Targaryen-shaped defendant in the witness box just yet.

Still, the precedent feels both momentous and incomplete. For parents, users, and creators, the line gets blurrier: If we talk to our technology, is it talking back, or is it simply running code with serious consequences? As Courthouse News documents, the judge’s decision slams the free-speech door on chatbots—for now—leaving tech companies to engineer their own safeguards before the law can catch up.

At this intersection of code and culpability, it turns out your AI can say (or more accurately, output) whatever it wants—but don’t expect it to invoke constitutional rights if things go sideways. Whether this new legal boundary helps anyone sleep better at night, or just makes the whole situation thornier, remains, like so much in the world of bots and brains, very much an open question.